ChatGPT has captivated millions of users with its eloquent responses, but could this artificial intelligence have unintended consequences for the planet?

This article explores ChatGPT’s environmental impact, including its carbon emissions, energy consumption, and resource usage during development and deployment.

As with any emerging technology, balancing continued innovation and democratization with ecological stewardship is key to ensuring AI like ChatGPT ultimately benefits society and the planet.

Is ChatGPT Bad for the Environment?

Yes, current research suggests ChatGPT has a sizable carbon footprint and consumes substantial energy, water, and resources compared to standard software.

Its vast data needs, extensive computing power demands, infrastructure requirements, and indirect electricity usage have notable environmental impacts that must be addressed responsibly.

Key Points

- Training and running ChatGPT’s advanced AI produces substantial greenhouse gas emissions.

- The energy demands risk exacerbating climate change and electricity constraints.

- Vast data needs magnify embedded resource consumption.

How Much Training Data Does ChatGPT Require?

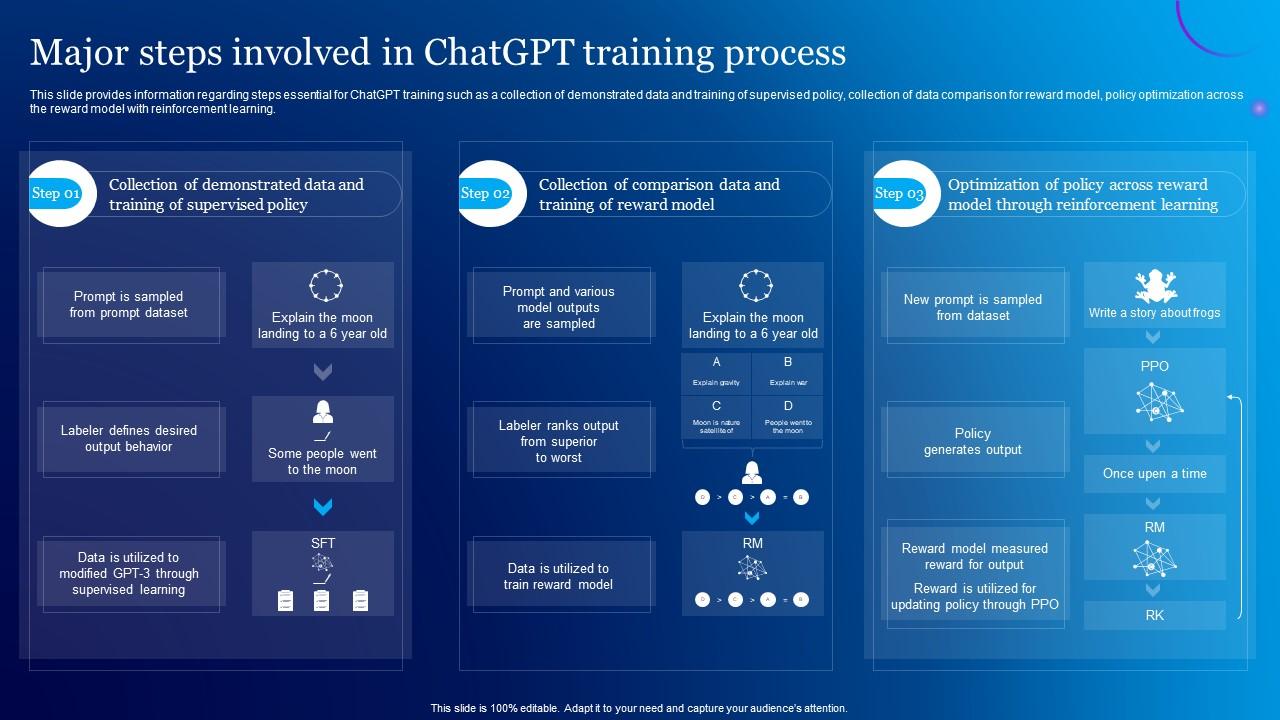

ChatGPT was developed by OpenAI and is built on its GPT-3.5 series, a refinement of the GPT-3 natural language processing model.

Training the original GPT-3 required ingesting hundreds of billions of words from websites, books, Wikipedia, and other text sources.

ChatGPT then underwent further supervised training and fine-tuning using human conversation data.

This massive volume of required training data contributes to ChatGPT’s substantial compute, energy, and emissions footprint.

What Type of Infrastructure Powers ChatGPT?

The servers running advanced neural network AI like ChatGPT require cutting-edge data center infrastructure.

ChatGPT’s foundation model, GPT-3, was trained on NVIDIA V100 GPUs in a high-bandwidth cluster provided by Microsoft.

Deploying ChatGPT also necessitates continuous access to hundreds of GPU servers.

State-of-the-art data centers with advanced cooling, power distribution, and capacity are required to support intensive AI systems like ChatGPT.

How Does ChatGPT Compare to Other Software?

By most estimates, ChatGPT’s environmental impact significantly exceeds standard software applications.

One study found that the compute power required to train GPT-3 emitted over 500 tons of carbon dioxide, equal to the lifetime emissions of five average American cars.

OpenAI acknowledges ChatGPT’s climate impact but is working to mitigate it through carbon offsets.

Some experts believe language AI could become greener over time as algorithms and hardware continue advancing.

But currently, few technologies rival cutting-edge AI’s data, compute, and energy appetite.

Could AI Like ChatGPT Worsen the Microchip Shortage?

The advanced GPUs and semiconductors needed to run systems like ChatGPT are the same chips in short supply globally.

As companies race to deploy ever-larger language models, demand for processing capacity and infrastructure expands.

Data center construction also requires concrete, steel, and land.

However, optimizing software and sharing models helps minimize hardware needs.

Overall, the voracious computing power appetite of AI risks exacerbating materials and microchip scarcity, necessitating responsible deployment.

Does ChatGPT Use a Lot of Electricity?

Yes, training and running ChatGPT’s natural language algorithms consume massive amounts of electricity.

One estimate found that the emissions from the energy required to train a large language model are comparable to those of a trans-American flight.

Continually querying and refreshing such a vast model during deployment also requires energy-intensive GPU servers.

Companies developing AI should procure renewable energy to mitigate emissions.

But the unchecked expansion of power-hungry models risks overburdening grids.

Operating ChatGPT sparingly helps reduce indirect electricity consumption and associated carbon emissions.

Could AI Like ChatGPT Worsen Climate Change?

Through energy-intensive training and infrastructure demands, ChatGPT currently contributes substantially to the technology-related emissions that are exacerbating climate change.

OpenAI claims to purchase carbon offsets, but high resource consumption persists.

The uncontrolled proliferation of exponentially larger AI models would further concentrate emissions and environmental damage.

However, optimizing code, sharing models, and running AI on renewable-powered, minimal hardware can curb impacts.

Overall, conscientious development and use of ChatGPT and related technologies help uphold ecological responsibility.

Why is ChatGPT Bad for the Environment?

ChatGPT is considered environmentally taxing for several key reasons:

Its foundation model, GPT-3, required training on massive amounts of text data, demanding huge computing power.

This training alone was estimated to emit CO2 equal to dozens of trans-American flights.

Continually running and refreshing a complex natural language model like ChatGPT requires rows of energy-intensive GPUs in data centers, consuming substantial electricity.

Training ever-larger AI models consumes scarce computing chips, exacerbating demand and resource use.

Expanding power-hungry data centers also increases concrete and land footprints.

Companies racing to deploy ChatGPT and similar AI must use renewable energy to mitigate the indirect emissions from electrical consumption.

Unchecked expansion of power-hungry models risks burdening grids.

Overall, while innovative, the immense data needs, computing demands, infrastructure requirements, and resulting electricity usage of advanced AI like ChatGPT incur heavy environmental costs that must be reduced.

What is the Carbon Footprint of ChatGPT?

Because ChatGPT is a new technology, estimates of its carbon footprint are limited.

Its foundation model, GPT-3, was estimated to emit over 500 tons of CO2 for training alone, equal to 330 roundtrip flights from New York to San Francisco.
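To see what the flight comparison implies, the two quoted figures can be divided to get a per-flight value. The short Python sketch below does only that arithmetic; the helper name `emissions_per_unit` is hypothetical, and the 500-ton input is the lower bound quoted above, so the result is a rough floor rather than a measured figure.

```python
def emissions_per_unit(total_tons: float, unit_count: int) -> float:
    """Return the emissions (tons CO2) implied for each unit of a comparison."""
    if unit_count <= 0:
        raise ValueError("unit_count must be positive")
    return total_tons / unit_count


# Figures quoted in the text above; "over 500 tons" is treated as a lower bound.
TRAINING_EMISSIONS_TONS = 500.0
ROUNDTRIP_FLIGHTS = 330

per_flight_tons = emissions_per_unit(TRAINING_EMISSIONS_TONS, ROUNDTRIP_FLIGHTS)
print(f"Implied emissions per roundtrip flight: about {per_flight_tons:.2f} tons CO2")
```

Under these inputs, each roundtrip flight in the comparison corresponds to roughly 1.5 tons of CO2.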

Operating ChatGPT on GPUs continuously consumes substantial electricity, but emissions depend on the energy source.
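The dependence on energy source can be made concrete with a rough calculation: emissions are approximately the electricity consumed multiplied by the carbon intensity of the grid supplying it. The Python sketch below is illustrative only. The function name `operational_emissions_kg`, the 1,000 kWh workload, and both carbon-intensity values are assumptions chosen for the example, not measurements of ChatGPT or of any real grid.

```python
def operational_emissions_kg(energy_kwh: float, intensity_kg_per_kwh: float) -> float:
    """Estimate CO2 emissions (kg) as energy used times grid carbon intensity."""
    if energy_kwh < 0 or intensity_kg_per_kwh < 0:
        raise ValueError("energy and carbon intensity must be non-negative")
    return energy_kwh * intensity_kg_per_kwh


# Hypothetical inputs for illustration only (not measured ChatGPT values).
ENERGY_KWH = 1_000.0       # assumed electricity used by a GPU workload
FOSSIL_HEAVY_GRID = 0.8    # assumed kg CO2 per kWh on a fossil-heavy grid
LOW_CARBON_GRID = 0.05     # assumed kg CO2 per kWh on a mostly renewable grid

fossil_kg = operational_emissions_kg(ENERGY_KWH, FOSSIL_HEAVY_GRID)
low_carbon_kg = operational_emissions_kg(ENERGY_KWH, LOW_CARBON_GRID)

print(f"Fossil-heavy grid: {fossil_kg:.0f} kg CO2")
print(f"Low-carbon grid:   {low_carbon_kg:.0f} kg CO2")
```

With these assumed values, the same workload emits far less on the low-carbon grid, which is why the choice of energy source matters as much as the amount of electricity used.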

One estimate found ChatGPT’s daily carbon footprint equivalent to 0.2% of the average Dane’s annual emissions.

However, optimizing code, sharing model architectures, and committing to renewable energy can greatly reduce ChatGPT’s climate impact over time.

Is ChatGPT Energy Efficient?

Currently, ChatGPT is not considered energy efficient compared to traditional software.

As a massive natural language model requiring advanced neural networks and significant computing power, it demands rows of GPUs running constantly to query and refresh outputs.

Training ever-larger models requires exponentially more intensive compute.

Recent estimates found training a language AI can emit as much carbon as five cars in their lifetimes.

While ChatGPT is not inherently energy efficient now, optimizing its code and running it exclusively on renewable energy can improve its ecological profile, as can conscientious use that minimizes unnecessary queries.

Are NFTs Harmful to the Environment?

Yes, creating and transacting blockchain-based NFTs (non-fungible tokens) consumes massive computing power and electricity, resulting in huge carbon footprints and climate impacts.

Estimates suggest minting and trading NFTs causes 20-100 times more emissions than traditional online transactions.

Their origins in energy-intensive crypto mining and their computing needs currently make most NFTs detrimental to environmental sustainability.

However, NFT platforms are moving to less resource-intensive designs, as with Ethereum’s shift from proof-of-work to proof-of-stake, though environmental stewardship remains a concern in this nascent space.

Key Takeaway:

- While innovative, ChatGPT’s immense training data needs, computer processing demands, infrastructure requirements, and electricity consumption currently incur steep environmental costs that must be urgently addressed.

FAQ

What is Compute Power?

Compute power refers to the speed, processing capabilities, and overall performance of computers and hardware like GPUs and semiconductors that run software code and models.

How Does Cloud Computing Impact the Environment?

Large data centers for cloud computing use tremendous amounts of energy, as well as water for cooling, which makes renewable power sourcing important. Sharing computing resources across many users reduces duplicated hardware.

Are NFTs Harmful to the Environment?

Yes, the blockchain computing underpinning NFTs consumes immense energy, resulting in high emissions and environmental harm.

GreenChiCafe shares the latest in sustainability innovations and eco-tech.
